ChatGPT, the revolutionary language model capable of generating human-like text, has undoubtedly captured the world's attention. Yet beneath its slick surface lurks a darker side, one filled with ethical dilemmas. While ChatGPT delivers undeniable benefits, its potential for misuse is a grave concern.

- One pressing issue is the risk of generating false information, which can spread rapidly and erode trust in reliable sources.

- Moreover, ChatGPT's ability to write persuasive content can be weaponized for detrimental purposes, such as propaganda campaigns.

- Finally, the lack of transparency surrounding ChatGPT's inner workings raises questions about bias in its outputs and its potential to reinforce existing societal divisions.

It is imperative that we tackle these issues head-on by promoting the responsible development and use of AI technologies like ChatGPT. Only then can we harness the potential of AI for good while minimizing its harms.

The Perils of ChatGPT

While ChatGPT represents a remarkable leap in artificial intelligence, its widespread adoption raises pressing concerns. The technology's ability to generate convincing text can be exploited for malicious purposes, such as creating fake news. Moreover, ChatGPT's lack of real-world understanding can lead to erroneous outputs that confuse or mislead users. As we enter this new era of AI, it is essential to address these challenges proactively.

One such peril lies in the potential for ChatGPT to enable the creation of malicious content on a massive scale. Its ability to generate convincing text can be used by scammers and hackers to craft deceptive messages that dupe unsuspecting victims.

Another concern is the potential for ChatGPT to reinforce existing biases. As a language model, ChatGPT's understanding is based on the vast collection of text data it was trained on. If this data contains biased information, ChatGPT may reproduce these biases in its outputs, reinforcing harmful societal norms.

Furthermore, the accessibility of ChatGPT raises concerns about its potential for misuse by individuals with harmful goals.

It is essential to establish safeguards and ethical frameworks to counteract these perils and ensure that ChatGPT is used for constructive purposes.

Unveiling ChatGPT's Risks: A Deep Dive into Potential Harms

While ChatGPT and other large language models (LLMs) offer exciting possibilities, it is crucial to acknowledge their potential for negative consequences. These powerful tools can be abused for malicious purposes, ranging from generating fake news to writing scam emails. Moreover, LLMs can perpetuate biases present in their training data, leading to unfair outcomes. It is imperative that we develop robust safeguards and ethical guidelines to mitigate these risks and ensure that LLMs are used for beneficial purposes.

To address these concerns, it is essential to invest in research on LLM safety and explainability. This includes developing techniques to detect malicious use, mitigating discriminatory outputs, and promoting the responsible development and deployment of LLMs. A multi-stakeholder approach involving researchers, developers, policymakers, and the general public is crucial to navigating the complex ethical challenges posed by ChatGPT and similar technologies.

Exposing ChatGPT's Flaws

A recent surge of scathing reviews has exposed some significant problems with ChatGPT, the popular AI chatbot. Users have voiced frustration with the chatbot's tendency to generate inaccurate information, as well as its failure to interpret complex questions. Additionally, some reviewers have criticized the program's awkward, stilted writing style. While ChatGPT remains a promising tool, these critical reviews serve as a reminder that AI technology is still under development and requires continued improvement.

Is ChatGPT a Threat?

The emergence of ChatGPT, a powerful artificial intelligence chatbot, has sparked debate about its potential impact on society. While ChatGPT offers exciting possibilities for automation, concerns have been raised about the dangers it poses.

One major concern is the possibility of ChatGPT being used to create misinformation, which could damage trust in institutions. Another issue is the impact ChatGPT could have on jobs, as it may eliminate certain roles.

Furthermore, there are concerns about the responsible use of ChatGPT. For example, its ability to generate realistic text could be misused for harmful purposes, such as impersonation.

It is essential to approach ChatGPT with both curiosity and caution. While it holds great promise, it is crucial to mitigate the risks it poses.

Policies are needed to ensure that ChatGPT is used ethically and responsibly, and informing the public about its capabilities and limitations is essential.

Beyond the Hype: The Downside of ChatGPT

While ChatGPT has captured the public imagination with its impressive language generation abilities, it is essential to recognize its potential downsides. One major concern is the risk of generating biased or incorrect information. Because ChatGPT is trained on a massive dataset of text and code, it can reproduce the societal biases present in that data, leading to harmful outcomes.

Furthermore, over-reliance on ChatGPT could hinder critical thinking and creativity. By providing quick and easy answers, it may discourage people from researching topics in depth on their own.

Finally, the ethical and philosophical implications of using AI-generated text are still unclear. Questions surrounding authorship, plagiarism, and the potential for misuse require careful evaluation.

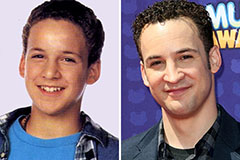
